This thesis builds a foundation for the philosophy of the Web by examining the crucial question: What does a Uniform Resource Identifier (URI) mean? Does it have a sense, and can it refer to things? A philosophical and historical introduction to the Web explains the primary purpose of the Web as a universal information space for naming and accessing information via URIs. A terminology, based on distinctions in philosophy, is employed to define precisely what is meant by information, language, representation, and reference. These terms are then employed to create a foundational ontology and principles of Web architecture. From this perspective, the Semantic Web is then viewed as the application of the principles of Web architecture to knowledge representation. However, the classical philosophical problems of sense and reference that have been the source of debate within the philosophy of language return. Three main positions are inspected: the logicist position, as exemplified by the descriptivist theory of reference and the first-generation Semantic Web; the direct reference position, as exemplified by Putnam and Kripke's causal theory of reference and the second-generation Linked Data initiative; and a Wittgensteinian position that views the Semantic Web as yet another public language. After identifying the public language position as the most promising, a solution of using people's everyday use of search engines as relevance feedback is proposed as a Wittgensteinian way to determine the sense of URIs. This solution is then evaluated on a sample of the Semantic Web discovered via queries from a hypertext search engine query log. The results are evaluated and the technique of using relevance feedback from hypertext Web searches to determine relevant Semantic Web URIs in response to user queries is shown to considerably improve baseline performance. Future work for the Web that follows from our argument and experiments is detailed, and outlines of a future philosophy of the Web are laid out.

To imagine a language means to imagine a form of life. Ludwig Wittgenstein Wittgenstein (1953)

The World Wide Web is without a doubt one of the most significant computational phenomena to date. Yet there are some questions that cannot be answered without a theoretical understanding of the Web. Although the Web is impressive as a practical success story, there has been little in the way of developing a theoretical framework to understand what - if anything - is different about the Web from the standpoint of long-standing questions of sense and reference in philosophy. While this situation may have been tolerable so far, serving as no real barrier to the further growth of the Web, with the development of the Semantic Web, a next generation of the Web ``in which information is given well-defined meaning, better enabling computers and people to work in cooperation,'' these philosophical questions come to the forefront, and only a practical solution to them can help the Semantic Web repeat the success of the hypertext Web Berners-Lee et al. (2001).

There is little doubt that the Semantic Web faces gloomy prospects. On first inspection, the Semantic Web appears to be a close cousin to another intellectual project, known politely as `classical artificial intelligence' (also known as `Good Old-Fashioned AI'), an ambitious project whose progress has been relatively glacial and whose assumptions have been found to be cognitively questionable Clark (1997). The initial bet of the Semantic Web was that the Web part of the Semantic Web would somehow overcome whatever problems the Semantic Web inherited from classical artificial intelligence, in particular, its reliance on logic and inference as the basis of meaning Halpin (2004). However, progress on the Semantic Web has also been relatively slow over the last decade. Neither new techniques nor large amounts of data have yet caused the Semantic Web to repeat the phenomenal success of the hypertext Web.

In order to even understand the astounding ascent of the Web we have to understand what fundamental component serves as its foundation. While we will go into this question in much greater detail in Chapter 4, tentatively we propose that the Web consists of a space of names called Uniform Resource Identifiers (URIs), unique identifiers whose syntax is given in Berners-Lee et al. (2005). Familiar examples of URIs include URIs for accessing web-pages, such as http://www.example.org, although even something as simple as a telephone number can be given a URI such as tel:+1-816-555-1212. It is precisely the use of URIs as their fundamental element that makes both the hypertext and Semantic Web part of the Web.
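To make the two example URIs concrete, the following minimal sketch splits them into their generic components using Python's standard `urllib.parse` module. The helper name `describe_uri` is hypothetical and introduced only for illustration; the sketch shows the shared scheme-plus-remainder shape of the identifiers and says nothing about what either URI means.

```python
from urllib.parse import urlsplit


def describe_uri(uri):
    """Split a URI into its scheme, authority, and path components."""
    parts = urlsplit(uri)
    return {
        "scheme": parts.scheme,      # e.g. 'http' or 'tel'
        "authority": parts.netloc,   # host name; empty for 'tel' URIs
        "path": parts.path,          # remainder after scheme and authority
    }


# The two example URIs from the text above.
for uri in ("http://www.example.org", "tel:+1-816-555-1212"):
    print(uri, "->", describe_uri(uri))
```

Both identifiers parse under the same generic syntax even though only the first can be dereferenced with HTTP, which is the sense in which URIs form a single space of names.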

The first problem that is self-evident to anyone who actually attempts to deploy any `real world' data on the Semantic Web is that there is little guidance on how to identify data using URIs, as well as what information to allow access to from these URIs. For a long time, this question was unanswered, and it has only recently been answered, and then cryptically Sauermann and Cygniak (2008). The second self-evident problem that is unavoidable to anyone using the Semantic Web for data integration is that different people create different URIs for the same thing. Recently, a set of principles known as `Linked Data' has given some guidance, but only on a superficial level Bizer et al. (2007).

The essential bet of the Semantic Web is that decentralized agents will come to an agreement on using the same URI to name a thing, including things that aren't accessible on the Web, like people, places, and abstract concepts. Yet there is virtually no ability to even find URIs for things on the Semantic Web. Currently, each application creates its own new URI for a thing, repeating the localism of classical artificial intelligence. Furthermore, it appears that most things either have no URIs or far too many.

The scientific hypothesis of this thesis must be stated in a two-fold fashion, first to state the problem and then to propose a solution. The problem is the simple question: What is the meaning of a URI? In order to analyze this problem further, we will propose that the Semantic Web is a kind of language that can be defined by its conformance to the principles of Web architecture, but nonetheless determining the meaning of a URI decomposes into a theory of sense and reference, so the Semantic Web inherits the classical problems regarding sense and reference from the philosophy of natural language. Our proposed solution is then that a theory of sense and reference suitable to encourage identifier re-usage on the Web can be implemented by employing relevance feedback from search engine results.

In order to orient the reader to the Web, we give a brief introduction to its history and significance in Chapter 2. We then introduce the philosophical terminology that serves as the foundation of the thesis in Chapter 3. Finally, we use this terminology to give an exegesis of Web architecture in Chapter 4. In Chapter 5 we propose that the Semantic Web, at least as embodied by the Resource Description Framework (RDF), is a kind of URI-based knowledge representation language for data integration based on the principles of Web architecture.

We address current theories of sense and reference in Chapter 6 and propose a neo-Wittgensteinian theory of sense and meaning for the Web in Chapter 8. There are three distinct positions on this question on the Semantic Web, each corresponding to a distinct philosophical theory of reference. The first response is the logicist position, which states that the referent(s) of a URI is determined by whatever model(s) satisfy the formal semantics of the Semantic Web Hayes (2004). This answer is identified with both the formal semantics of the Semantic Web itself and the traditional Russellian theory of names and its descriptivist descendants Russell (1905). While this answer may be sufficient for automated inference engines, it is insufficient for humans, as it often crucially under-determines what kind of things the URI refers to. As the prevailing position in early Semantic Web research, this position has borne little fruit. Another response is the direct reference position for the Web, which states that the meaning of a URI is whatever was intended by the owner. This answer is identified with the intuitive understanding of many of the original Web architects like Berners-Lee and a special case of Putnam's `natural kind' theory of meaning. This position is also nearly identical to Kripke's famous response to Russell, the causal theory of reference Kripke (1972); Putnam (1975).

In Chapter 7, we describe a search engine query log from a major hypertext search engine (Microsoft Live.com), and how we derive query terms for people, places, and abstract concepts from this query log and then use those to derive Semantic Web URIs. From this query-driven analysis of the deployed Semantic Web, we empirically demonstrate that following the principles of Web architecture and endorsing the direct reference position does not lead to URI re-usage, but that instead there are still likely to be multiple URIs for the same thing and that it is not easy for users to retrieve these URIs in response to a query given as keywords to a search engine. We finally turn to the third position, the public language position, which states that since the Semantic Web is a form of language and as a language exists as a mechanism for co-ordination among multiple agents, then the meaning of a URI is the use of the URI by a community of agents. As vague as this position seems at first glance, we argue this analysis of sense and reference is the best fit to how natural language works, and it supersedes and even subsumes the two other positions. While there are `semiotic' theories of reference, we will not inspect these in this thesis, although we believe that these theories can be incorporated into a public language position. As this theory of meaning works for natural language, it follows that it is a good bet for the Semantic Web, for the Semantic Web is just a form of language, albeit an unusual one.

The public language position implies a public mechanism that lets agents in turn create, find, and re-use URIs. While it may be intuitively correct to endorse a neo-Wittgensteinian theory of meaning for the Semantic Web, this does little for the Semantic Web if a practical implementation cannot be demonstrated. As Wittgenstein would say, one must remember that every ``language game'' comes with a ``form of life'' Wittgenstein (1953). Without a doubt, one activity that seems to be prevalent among users of the Web is searching for web-pages using natural language query terms via a search engine Halpin and Thompson (2005). Therefore, the obvious solution to the problem of finding out what a URI means is to take advantage of current search engines. Chapter 8 details, at a high level of abstraction, a design for determining URI meaning based on relevance feedback from users of keyword-based hypertext search engines. This puts the Semantic Web in a ``virtuous cycle'' with the behavior of users on the hypertext Web Baeza-Yates (2008). Our implementation is then tested with real data and real users in Chapter 9, and we show how our results improve on various baseline systems for the information retrieval of Semantic Web URIs. Finally, in Chapter 10 we summarize the work so far and discuss the advantages and limitations of our particular proposed solution. We also present plans for future work as well as further philosophical questions that arise from the thesis.

The chapters build upon one another to make the thesis complete as a whole. Readers interested in particular subjects may wish to focus their attention on particular components, although they are warned that concepts and findings developed in earlier chapters are referred to in later chapters. As the project lies in an interdisciplinary and emergent area, there is no singular and comprehensive literature review in a separate chapter, but instead the literature is reviewed and mentioned as necessary throughout the thesis.

This thesis is explicitly limited in scope, concentrating only on the terminology necessary to phrase a single, if broad, question: ``How can we determine the meaning of a URI on the Semantic Web?'' Although the thesis is interdisciplinary, as it involves elements as diverse as the philosophy of language and machine-learning, these elements are only harnessed insofar as they are necessary to phrase our central hypothesis and present a possible solution.

Due to this constraint, this thesis is not an attempt to develop a philosophy of computation Smith (2002a), or a philosophy of information Floridi (2004), or even a comprehensive ``philosophy of the Web'' Halpin (2008b). These are much larger projects outside the scope of a single thesis, and even a single individual. However, in combination with the fully-formed work in the philosophy of mind and language, we hope that at least this thesis provides a starting point for future work in these areas. So we use notions from philosophy selectively, and then define the terms in light of our goal of articulating the principles of Web architecture and the Semantic Web, rather than attempting to articulate or define the terms of a systematic philosophy of the Web. Many of the philosophical terms in this thesis could be explored much further, but are necessarily not explored, so as to constrain the thesis to a reasonable size. Unlike a philosophical thesis, counter-arguments and arguments are generally not given for terminological definitions, but instead references are given to the key works that explicate these notions further.

This thesis does not inspect every single possible answer to the question ``What is the meaning of a URI?'', but only three distinct positions. An inspection of every possible theory of meaning and reference is beyond the scope of the thesis, as is an inspection of the tremendous secondary literature that has accrued over the years for even those limited viewpoints that we do inspect in Chapter 6 and Chapter 8. Instead, we will focus only on theories of meaning and reference that have been brought up explicitly in the various arguments over this question in the Web by the primary architects of the Web and the Semantic Web. Our proposed solution rests on a Wittgensteinian theory of meaning, one of the most infamously dense and infuriatingly obscure treatments of sense and reference.

Finally, while the experimental component has done its best to be realistic, it is in no way complete. Pains have been taken to ensure that the experiment, unlike much work in the Semantic Web, at least uses real data, feedback from real users, and is properly evaluated over a wide range of algorithms and parameters. Yet a real implementation of our proposed solution would require full-scale implementation and co-operation of both a major hypertext search engine and a Semantic Web search engine. Obviously, this is beyond the means of a thesis, as is any foundational or even ground-breaking work in information retrieval. Instead, we show how information retrieval can be applied to the Semantic Web to help solve one of its most difficult problems. While various parts of the experiment could no doubt be optimized and scaled up still further, for a proof-of-concept solution to a very difficult problem, this experiment should be sufficient.

In order to aid the reader, the thesis employs a number of notational conventions. In particular, we only use ``double'' quotes to quote a particular author or other work. When a new word is introduced and deployed in an unusual manner to be clarified later, we use `single' quotes. `Single' quotes are also used when a word is supposed to be understood as the word qua word, a mention of the word, rather than a use of the word. When a term is defined, the word is first labeled using bold and italic fonts, and either immediately followed or preceded by the definition given in italics. Mathematical or formal terms are italicized, as is the use of emphasis in any sentence. Finally, the names of books and other large works are often italicized. In general, technical terms like HTTP are often abbreviated by their capitalized initials. One of the largest problems of this whole area historically has been a rather ad-hoc use of terms, and we hope this fairly rigorous notational convention helps separate the use, mention, definition, and direct quotations of words.

Despite its ambitious title, this thesis is a modest attempt to both articulate and apply the principles of Web architecture in order to answer a question at the heart of the Semantic Web: What does a URI mean? We provide a solution by analyzing the primary positions in philosophy of language and Web architecture, and by constructing a proof-of-concept solution. We do not claim to provide a complete or unique solution, but do argue our solution is better than other competing positions and solutions, in particular in light of our implementation. We do not claim to have solved any of these problems regarding meaning and reference for language in general, especially natural language, and are fully confident that philosophers will continue arguing over these issues for at least the next century. We do present a proof-of-concept solution for these problems of meaning and reference in the special and limiting case of the Semantic Web.

If we could rid ourselves of all pride, if to determine our species we kept strictly to what historic and prehistoric periods show us to be the constant characteristic of man and of intelligence, we should not say Homo Sapiens but Homo Faber. In short, intelligence, considered in what seems to be its original feature, is the faculty of manufacturing artificial objects, especially tools for making tools. Henri Bergson Bergson (1911)

The subject matter of this thesis is the nature of sense and reference on the World Wide Web, and this chapter provides the necessary background information to motivate the thesis and to make the central hypothesis of the thesis comprehensible. In this thesis, we consider the World Wide Web (hereafter referred to only as `the Web') as a first-class subject matter for study. This chapter delves into the origins of the Web so that the question of meaning and reference on the Web can be understood in its proper context.

Why the Web? Why not look at more interesting problems in a subject like artificial intelligence? In his One Hundred Billion Lines of C++, computer scientist-turned-philosopher Brian Cantwell Smith notes that the models of computing used in debates over reference and representation tend to frame the debate as if it were between ``classical'' logic-based symbolic reasoners and some ``connectionist'' and ``embodied'' alternative ranging from neural networks to epigenetic robotics Smith (1997). Smith then goes on to aptly state that the kinds of computational systems discussed in artificial intelligence and philosophy tend to ignore the vast majority of existing systems, for ``it is impossible to make an exact estimate, but there are probably something on the order of $10^{11}$, or one hundred billion lines of C++ in the world. And we are barely started. In sum: symbolic AI systems constitute approximately 0.01% of written software'' Smith (1997). The same small fraction likely holds true of ``non-symbolic AI'' computational systems such as robots, artificial life, and connectionist networks. While numbers by themselves hold little intellectual weight, one could always argue that the vast majority of computational systems may have no impact on our understanding of representation and intelligence. In this thesis we argue otherwise. The wide class of computational systems present a ``middle distance'' where questions of reference, representation, and intelligence come to the forefront and may even be more tractable than in the case for humans Smith (1995). One of the most significant members to date of this wider class of computational systems is the World Wide Web, described by Tim Berners-Lee, the person widely acclaimed to be the `inventor' of the Web, as ``a universal information space'' Berners-Lee et al. (1992).

Michael Wheeler, a philosopher who is well-known for his Heideggerian defense of embodiment, surmises that ``the power of the Web as a technological innovation is now beyond doubt'' but ``what is less well appreciated is the potential power of the Web to have a conceptual impact on cognitive science'' and so this thesis may provide a new ``fourth way'' in addition to the ``three kinds of cognitive science or artificial intelligence: classical, connectionist, and (something like) embodied-embedded'' Wheeler (2008). While countless papers have been produced on the technical aspects of the Web, very little has been done explicitly on the Web qua Web as a subject matter. This does not mean there has not been interest, although the interest has come in particular more from the side of those working on developing the Web rather than those already entrenched in philosophy, linguistics, and artificial intelligence. In particular, the workshop series on Identity, Reference, and the Web has provoked many articles on these topics from prominent Web architects, although not from philosophers per se Bouquet et al. (2007b); Halpin et al. (2006); Bouquet et al. (2008). In this spirit, what we will undertake in this thesis as a whole is to apply many well-known philosophical theories of reference and representation to the phenomenon of the Web.

In order to establish the relative autonomy of the Web as a subject matter, we recount its origins and so its relationship to other projects, both intellectual projects such as Engelbart's Human Augmentation Project and more purely technical projects such as the Internet Engelbart (1962). It may seem odd to begin this thesis, which involves very specific questions about meaning and reference on the Web, with a thorough history of the Web. To understand these questions we must first have an understanding of the boundaries of the Web and the normative documents that define the Web. The Web is a fuzzy and ill-defined subject matter whose precise boundaries and even definition are unclear. Unlike some subject matters like chemistry, the subject matter of the Web is not necessarily very stable, for the Web is not a `natural kind,' as it is a technical artifact. So we will take the advice of the philosopher of technology Gilbert Simondon, ``Instead of starting from the individuality of the technical object, or even from its specificity, which is very unstable, try to define the laws of its genesis in the framework of this individuality or specificity, it is better to invert the problem: It is from the criterion of the genesis that we can define the individuality and the specificity of the technical object: the technical object is not this or that thing, given hic et nunc but that which is generated'' Simondon (1958). In other words, we must first trace the creation of the Web before attempting to define it, imposing on the Web what Fredric Jameson calls ``the one absolute and we may even say transhistorical imperative, that is: Always historicize!'' Jameson (1981). We build on the work of this chapter in Chapter 4 to delineate the precise principles of the Web.

What is the Web, and what is its significance? At first, it appears to be a relative upstart upon the historical scene, with little connection to anything before it, an ahistorical and unprincipled `hack' that came unto the world unforeseen and with dubious academic credentials. The purpose of this section is to dispel this myth.

The intellectual trajectory of the Web is a fascinating, if mostly unknown, history. Although it is well-known that the Web bears some striking similarity to Vannevar Bush's `Memex' idea from 1945 Bush (1945), the Web is itself usually thought of more as a technological innovation than as an intellectually rich subject matter such as artificial intelligence or cognitive science. However, the Web's heritage is just as rich as that of artificial intelligence and cognitive science, and can be traced back to the same roots, namely the `Man-Machine Symbiosis' project of Licklider Licklider (1960). The `Man-Machine Symbiosis' project gave birth to two strands of research. The first strand is that of artificial intelligence done in the spirit of McCarthy, Minsky, and others involved in the original Dartmouth proposal McCarthy et al. (1955). However, there exists another lesser-known strand of research, the work on `human augmentation' exemplified by the work of Engelbart that eventually gave us both the mouse and the Internet Engelbart (1962). Human augmentation, instead of hoping to replicate human intelligence as artificial intelligence did, sought only to enhance it. The Web itself is a descendant of Engelbart's vision, and this historical trajectory, leading from Licklider to the creation of the Web, is detailed in the following sections.

The first precursor to the Web was glimpsed, although never implemented, by Vannevar Bush. For Bush, the primary barrier to increased productivity was the lack of an ability to easily recall and create records, and Bush saw in microfiche the basic element needed to create what he termed the ``Memex,'' a system that lets any information be stored, recalled, and annotated through a series of ``associative trails'' Bush (1945). The Memex would lead to ``wholly new forms of encyclopedias with a mesh of associative trails,'' a feature that became the inspiration for ``linking'' in hypertext Bush (1945). However, Bush could not implement his vision on the analogue computers of his day.

The Web had to wait for the invention of digital computers and networks, both of which bear some debt to the work of J.C.R. Licklider, a disciple of Norbert Wiener Licklider (1960). Wiener thought of feedback as an overarching principle of organization in any science, and one that was equally universal among humans and machines Wiener (1948). Licklider expanded this notion of feedback loops to a vision of low-latency feedback between humans and digital computers. The intellectual project of `Man-Machine Symbiosis' is distinct from and prior to cognitive science and artificial intelligence, both of which hypothesize that the human mind can be construed as either computational itself or even implemented on a computer. Licklider held that while the human mind itself might not be computational (although Licklider cleverly remained agnostic on that particular gambit), the human mind was definitely complemented by computers. As Licklider himself put it, ``The fig tree is pollinated only by the insect Blastophaga grossorun. The larva of the insect lives in the ovary of the fig tree, and there it gets its food. The tree and the insect are thus heavily interdependent: the tree cannot reproduce without the insect; the insect cannot eat without the tree; together, they constitute not only a viable but a productive and thriving partnership. This cooperative `living together in intimate association, or even close union, of two dissimilar organisms' is called symbiosis. The hope is that, in not too many years, human brains and computing machines will be coupled together very tightly, and that the resulting partnership will think as no human brain has ever thought and process data in a way not approached by the information-handling machines we know today'' Licklider (1960). The goal of `Man-Machine Symbiosis' is then the enabling of reliable coupling between humans and their `external' information as given in digital computers.
To obtain this coupling, the barriers of time and space needed to be overcome so that the symbiosis could operate as a single process.

The `Man-Machine Symbiosis' project was not merely a philosophical project, but an engineering project. In order to provide the funding needed to assemble what Licklider termed his ``galactic network'' of researchers to implement the first step of the project, Licklider became the institutional architect of the Information Processing Techniques Office at the Advanced Research Projects Agency (ARPA) Waldrop (2001). Licklider first tackled the barrier of time. Early computers had large time lags between the input of a program to a computer on a medium such as punch-cards and the reception of the program's output. This lag could be overcome via the use of time-sharing, taking advantage of the fact that the computer, despite its centralized single processor, could run multiple programs in a non-linear fashion. Instead of idling while waiting for the next program or human interaction, in moments nearly imperceptible to the human eye, a computer would share its time among multiple humans McCarthy (1992).

Douglas Engelbart had independently generated a proposal for a `Human Augmentation Framework' that shared the same goal as the `Man-Machine Symbiosis' project of Licklider, although it differed by placing the human at the center, focusing on the ability of the machine to extend the human user, while Licklider imagined a more egalitarian partnership between humans and digital computers Engelbart (1962). This focus on human factors led Engelbart to the realization that the primary reason for the high latency between the human and the machine was the interface of the human user to the machine itself, as a keyboard was at best a limited channel. After extensive testing of what devices enabled the lowest latency between humans and machines, Engelbart invented the mouse and other, less successful interfaces, like the one-handed `chord' keyboard Waldrop (2001). By employing these interfaces, the temporal latency between humans and computers was decreased even further.

The second barrier to be overcome was space, so that any computer should be accessible regardless of its physical location. The Internet ``came out of our frustration that there were only a limited number of large, powerful research computers in the country, and that many research investigators who should have access to them were geographically separated from them'' Leiner et al. (2003). Licklider's lieutenant Bob Taylor and his successor Larry Roberts contracted Bolt, Beranek, and Newman (BBN) to create the Interface Message Processor, the hardware needed to connect the various time-sharing computers of Licklider's ``galactic network'' that evolved into the ARPANet Waldrop (2001). While BBN provided the hardware for the ARPANet, the software was left undetermined, so an informal group of graduate students constituted the Internet Engineering Task Force (IETF) to create software to run the Internet Waldrop (2001).

The IETF has historically been the main body that creates the protocols that run the Internet. It still maintains the informal nature of its foundation, with no formal structure such as a board of directors, although it is officially overseen by the Internet Society. The IETF informally credits as its main organizing principle the credo ``We reject kings, presidents, and voting. We believe in rough consensus and running code'' Hafner and Lyons (1996). Decisions do not have to be ratified by consensus or even majority voting, but require only a rough measure of agreement on an idea. The most important products of these list-serv discussions and meetings are IETF RFCs (Requests for Comments), which differ in their degree of reliability, from the unstable `Experimental' to the most stable `Standards Track.' The RFCs define Internet standards such as URIs and HTTP Berners-Lee et al. (2005, 1996). RFCs, while not strictly academic publications, have a de facto normative force on the Internet and therefore on the Web, and so they will be referenced considerably throughout this thesis.

Before the Internet, networks were assumed to be static and closed systems, so one either communicated with a network or not. However, early network researchers determined that there could be ``open architecture networking'' where a meta-level ``internetworking architecture'' would allow diverse networks to connect to each other, so that ``they required that one be used as a component of the other, rather than acting as a peer of the other in offering end-to-end service'' Leiner et al. (2003). In the IETF, Robert Kahn and Vint Cerf devised a protocol that took into account, among others, four key factors, as cited below Leiner et al. (2003):

In this protocol, data is subdivided into `packets' that are all treated independently by the network. Data is first divided into roughly equal-sized packets by TCP (Transmission Control Protocol), which then sends the packets over the network using IP (Internet Protocol). Together, these two protocols form a single protocol, TCP/IP Cerf and Kahn (1974). Each computer is named by an Internet Number, a four-byte destination address such as 152.2.210.122, and IP routes the packets through various black-boxes, like gateways and routers, that do not try to reconstruct the original data from the packet. At the recipient's end, TCP collects the incoming packets and then reconstructs the data.
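The division and reassembly described above can be sketched in a few lines of Python. This is a toy illustration only, not an implementation of TCP or IP: the function names `split_into_packets` and `reassemble`, the 8-byte packet size, and the use of sequence numbers to restore order are all assumptions made for the example.

```python
import random

def split_into_packets(data: bytes, size: int = 8) -> list[tuple[int, bytes]]:
    """Divide data into numbered chunks of at most `size` bytes (the sender's role)."""
    return [(seq, data[i:i + size])
            for seq, i in enumerate(range(0, len(data), size))]

def reassemble(packets: list[tuple[int, bytes]]) -> bytes:
    """Reorder packets by sequence number and join them (the recipient's role)."""
    return b"".join(chunk for _, chunk in sorted(packets))

message = b"Ralph won a trip to the Eiffel Tower"
packets = split_into_packets(message)
random.shuffle(packets)  # each packet is handled independently, so order may change
restored = reassemble(packets)
```

The intermediate step (the shuffle) never inspects the message as a whole, which mirrors the point that gateways and routers do not try to reconstruct the original data.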

The Internet connects computers over space, and so provides the physical layer over which the ``universal information space'' of the Web is implemented. However, it was a number of decades before the latency of space and time became low enough for the Web to become not only universalizing in theory, but universalizing in practice. An historical example of attempting a Web-like system before the latency was acceptable would be the NLS (oNLine System) of Engelbart Engelbart (1962). The NLS was literally built as the second node of the Internet, the Network Information Center, the ancestor of the domain name system. The NLS allowed any text to be hierarchically organized in a series of outlines with summaries, giving the user freedom to move through various levels of information and link information together. The most innovative feature of the NLS was a journal for users to publish information in and a journal for others to comment upon, a precursor of blogs and wikis Waldrop (2001).

However, Engelbart's vision could not be realized on the slow computers of his day. Although time-sharing computers reduced temporal latency on single machines, too many users sharing a single machine made the latency unacceptably high, especially when using an application like NLS. Furthermore, his zeal for reducing latency made the NLS far too difficult to use, as it depended on obscure commands that were far too complex for the average user to master within a reasonable amount of time Bardini (2000). It was only after the failure of the NLS that researchers at Xerox PARC developed the personal computer, which by providing each user their own computer reduced the temporal latency to an acceptable amount Waldrop (2001). When these computers were connected with the Internet and given easy-to-use interfaces as developed at Xerox PARC, both temporal and spatial latencies were made low enough for ordinary users to access the Internet. This convergence of technologies, the personal computer and the Internet, is what allowed the Web to be implemented successfully and enabled its wildfire growth, while previous attempts like NLS were doomed to failure as they were conceived before the technological infrastructure to support them had matured.

Perhaps due to its own anarchic nature, the IETF had produced a multitude of incompatible protocols such as FTP (File Transfer Protocol) and Gopher Anklesaria et al. (1993); Postel and Reynolds (1985). While protocols could each communicate with other computers over the Internet, there was no universal format to identify information regardless of protocol. One IETF participant, Tim Berners-Lee, had the concept of a ``universal information space'' which he dubbed the ``World Wide Web'' Berners-Lee et al. (1992). His original proposal to his employer CERN brings his belief in universality to the forefront, ``We should work towards a universal linked information system, in which generality and portability are more important than fancy graphics and complex extra facilities'' Berners-Lee (1989). The practical reason for Berners-Lee's proposal was to connect the tremendous amounts of data generated by physicists at CERN together. Later as he developed his ideas, Berners-Lee came into direct contact with Engelbart, who encouraged him to continue with the idea of the Web despite his academic work being rejected at conferences like ACM Hypertext 1991.1

In the IETF, Berners-Lee, Fielding, Connolly, Masinter, and others spear-headed the development of URIs (Universal Resource Identifiers), HTML (HyperText Markup Language) and HTTP (HyperText Transfer Protocol). By being able to reference anything with equal ease due to URIs, a web of information would form based on ``the few basic, common rules of `protocol' that would allow one computer to talk to another, in such a way that when all computers everywhere did it, the system would thrive, not break down'' Berners-Lee (2000). The Web is a virtual space for naming information built on top of the physical infrastructure of the Internet that could move bits around, and the Web was built through specifications that could be implemented by anyone, ``What was often difficult for people to understand about the design was that there was nothing else beyond URIs, HTTP, and HTML. There was no central computer `controlling' the Web, no single network on which these protocols worked, not even an organization anywhere that `ran' the Web. The Web was not a physical `thing' that existed in a certain `place.' It was a `space' in which information could exist'' Berners-Lee (2000).

The very idea of a universal information space seemed at least ambitious, if not de facto impossible, to many. The IETF rejected Berners-Lee's idea that any identification scheme could be universal. In order to get the initiative of the Web off the ground, Berners-Lee surrendered to the IETF and changed the name of his universal naming system from Universal Resource Identifiers (URIs) to Uniform Resource Locators (URLs) Berners-Lee (2000). The Web began growing at a prodigious rate once the employer of Berners-Lee, CERN, released any intellectual property rights they had to the Web. The growth of the Web increased even more dramatically after Mosaic, the first graphical browser, was released. However, browser vendors started adding supposed `new features' that soon led to a `lock-in' where certain sites could only be viewed by one particular corporate browser. These `browser wars' began to fracture the rapidly growing Web into incompatible information spaces, thus nearly defeating the proposed universality of the Web Berners-Lee (2000).

Berners-Lee in particular realized it was in the long-term interest of the Web to have a new form of standards body that would preserve its universality by allowing corporations and others to have a more structured contribution than possible with the IETF. With the informal position of merit Berners-Lee had as the supposed inventor of the Web (although he freely admits that the invention of the Web was a collective endeavor), he and others constituted the World Wide Web Consortium (W3C), a non-profit dedicated to ``leading the Web to its full potential by developing protocols and guidelines that ensure long-term growth for the Web'' Jacobs (1999). In the W3C, membership was open to any organization, commercial or non-profit. Unlike participation in the IETF, W3C membership came at a considerable fee. The W3C is organized as a strict representative democracy, with each member organization sending one member to the Advisory Committee of the W3C, although decisions technically are always made by the Director, Berners-Lee himself. By opening up a ``vendor neutral'' space, companies who previously were interested primarily in advancing the technology for their own benefit could be brought to the table. The primary product of the World Wide Web Consortium is a W3C Recommendation, a standard for the Web that is explicitly voted on and endorsed by the W3C membership. W3C Recommendations are thought to be similar to IETF RFCs, with normative force due to the degree of formal verification given via voting by the W3C Membership. A number of W3C Recommendations have become very well known technologies, ranging from the vendor-neutral versions of HTML Raggett et al. (1999), which stopped the fracture of the universal information space at the hands of the browser wars, to XML, which has become a prominent transfer syntax for almost any type of data Bray et al. (1998).
This thesis will cite W3C Recommendations when appropriate, as these are among the main normative documents that define the Web. Together with IETF RFCs, these normative standards collectively define the foundations of the Web. It is by agreement on these standards that the Web functions as a whole. However, the rough-and-ready process of the IETF and even the W3C has led to a terminological confusion that must be sorted out in order to inspect the problem of how URIs can identify things outside the Web itself.

Philosophy, more rigorously understood, is the discipline that consists of creating concepts. Gilles Deleuze and Felix Guattari Deleuze and Guattari (1991)

A major focus of this thesis is to use terminology from philosophy of computation, language, and the mind to produce a small set of fairly well-defined terms that we can use to express the question: What does a URI refer to? Afterwards, we use these terms to determine what the boundaries of the Web are in Chapter 4 and to clarify the Semantic Web in Chapter 5.

For the sake of brevity we will not in this chapter explore all the nuances and consequences arising from our admittedly broad-sweeping terminology. This is unfortunate, as there is just not enough space to address, much less defuse, all possible counter-arguments. In this manner, this chapter will be decidedly non-philosophical, although we will attempt to mitigate this problem by at least providing references to well-known philosophers from whom we have adopted our terminology; often, however, we will use their terms in a slightly modified form so that the terminology may fit the problem at hand. The theoretical framework and terminological definitions given in this chapter provide the foundation for the entire thesis, coming to a head in our proposed solution to the issues of reference and representation on the Semantic Web in Chapter 8. While this chapter may not appear directly relevant to the Web, the philosophical terminology established here will be used to discipline the wild and unruly terminology of Web architecture in the next chapter. Again, we do not claim that our historical and philosophical foundations of Web architecture are complete and systematic, only that they are systematic and complete enough to pose and solve our hypothesis, without either the question or our solution using vacuous terminology. Otherwise, the result will be terminological confusion, causing any reader to fall into a conceptual swamp of undefined and fuzzy terms like `meaning', `reference', and `representation.' We first explore the notion of `information' at the heart of Berners-Lee's definition of the Web as a `universal information space' and then rebuild a notion of `digitality' and finally `representation' on top of our notion of information, since the Web is composed of not just any representations, but digital representations.

On the surface a term like `representation' seems to be what Brian Cantwell Smith calls ``physically spooky,'' since a representation can refer to something with which it is not in physical contact Smith (1995). This spookiness is a consequence of a violation of common-sense physics, since representations appear to have a non-physical relationship with things that are far away in time and space. This relationship of `aboutness' or intentionality is often called `reference.' While it would be premature to define `reference,' a few examples will illustrate its usage: someone can think about the Eiffel Tower in Paris without being in Paris, or even having ever set foot in France; a human can imagine what the Eiffel Tower would look like if it were painted blue, and one can even think of a situation where the Eiffel Tower wasn't called the Eiffel Tower. Furthermore, a human can dream about the Eiffel Tower, make a plan to visit it, and so on, all while being distant from the Eiffel Tower. Reference also works temporally as well as distally, for one can talk about someone who is no longer living such as Gustave Eiffel. Despite appearances, reference is not epiphenomenal, for reference has real effects on the behavior of agents. Specifically, one can remember what one had for dinner yesterday, and this may impact on what one wants for dinner today, and one can book a plane ticket to visit the Eiffel Tower after making a plan to visit it.

Can we get to the heart of this mystery at the heart of representation and other intentional terminology? The trick would be to define what precisely our common-sense notion of reference is, and to do this requires some terminological ground work while avoiding delving into amateur quantum physics. The terminology here is supposed to reconstruct rather carefully some common-sense demarcations in an uncontroversial yet broad manner so that these terms can deal with a suitably broad range of phenomena, including the Web. To pin the supposed `spookiness' of reference down, we will introduce a few terms. A thing is a general-purpose term used to denote events, objects, and proto-objects in a ``patch of metaphysical flux,'' where a thing can be defined by having some regularity in time and space that can distinguish it from other possible things Smith (1995). A regularity is a lack of difference in time and space at a given level of abstraction. We shall often use the term process interchangeably with things to evoke the dynamic and temporally unstable character of a `thing.' We will also sometimes use the term system when we are emphasizing the fact that one thing can also be, on a different level of abstraction, given as multiple things. This can be considered a mere change of focus, for the term `thing' emphasizes the everyday, solid, and static nature of the ``metaphysical flux,'' while the term `process' refers more to its dynamic aspect Smith (1995). All things and processes are the world. There are generally two kinds of separation possible in processes in a relativistically invariant theory, a physical theory that obeys the rules of special relativity so that the theory looks the same for any constant velocity observer, as processes may be separated in time or space. Things that are separated by time and space are distal while those things that are not separated by time and space are proximal. 
As synonyms for distal and proximal, we will use non-local and local, or just disconnected and connected. Although this may seem to be an excess of adjectives to describe a simple distinction, this aforementioned distinction will underpin our notions of representation and reference. In figures, local relationships will be marked with a dotted line, while distal (and so possibly referential) relationships are marked with the uniform bold line.

While a discussion about counterfactuals and causation is far beyond our scope, we will rely on the common-sense intuition that if one thing is connected with another thing and a change in the former thing is followed by a change in the latter thing, that former process may have caused the change in the latter process. In other words, the first thing is effective, and the other things that may be affected by a particular thing are within its effective reach. Anything that appears to violate these common-sense intuitions about physics and causation is spooky, while anything that does not is non-spooky. A property of the distal is that it is beyond effective reach; as Smith puts it, ``distance is where no action is at'' Smith (1995). For example, a tourist hitting their toe on the Eiffel Tower has no immediate effect on someone in Edinburgh. With these preliminary terms in hand, we return to the topic of the Web.

The Web has been defined as a ``universal information space'' by Berners-Lee, and we will take this definition seriously and attempt to unravel it, in the hope that it will provide clues on how we can define both `representation' and `reference' in a manner that can do justice to the Web Berners-Lee et al. (1992). The strategy to be employed is to inspect Berners-Lee's evocative notion of the Web as a universal information space in order to provide a less complex notion of information that can serve as the foundation for building the more complex notion of representation. The first question to be answered then is the perennial question: What is information? Although we cannot comprehensively answer this question in full, we can sketch some crucial distinctions.

In order to make progress on defining the Web, we will have to reformulate the notion of information, taking inspiration from Shannon's communication theory while allowing the central concept of information to be grounded in the wider philosophy of language. To rephrase, information is whatever regularities are held in common between two things, a source and a receiver Shannon and Weaver (1963). To have something in common means to share the same regularities, e.g. parcels of time and space that cannot be distinguished at a given level of abstraction. This definition correlates with information being the inverse of the amount of `noise' or randomness in a system, and the amount of information being equivalent to a reduction in uncertainty. This preservation or failure to preserve information can be thought of as the sending of a message between the source and the receiver over a channel. Whether or not the information is preserved over time or space is due to the properties of a physical substrate known as the channel. The message realizes on some level of abstraction the information, so we will often call some particular message with some particular information an `information-bearing message.' Already, information reveals itself to be not just a singular thing, but something that exists at multiple levels. In particular, we are interested in two more distinctions in information: that between abstraction and realization, and that between content and encoding.

The first distinction is between the information itself on a level of abstraction, and the particular realization of information. Information is often thought of as an abstraction, and this is true insofar as the same information can be realized by many possible messages. In order to cope with this, a distinction should be made between the information on a level of abstraction and any of the concrete realizations that embody the information at a given juncture in space-time. To use an example, Daniel in Paris (the source) is trying to send a message to Amy (the receiver), a secretary in Boston, that one of her fellow workers, Ralph, has won a trip to the Eiffel Tower. Daniel can send this message in a variety of realizations: e-mail, a letter in the post, or even via a friend who happens to be passing through Boston. The information itself is just the precise physical regularity at a level of abstraction, and these regularities can be embodied by many different possible messages. These messages are not arbitrary, however, but must have a certain ability to preserve the regularity: in the case of Daniel, it is unlikely he could convey his message from Paris to Boston using smoke signals. It would simply not reach the receiver in any recognizable form. So, a level of abstraction is certain physical differences and regularities that can be recognized by an agent and so may have a causal effect on the agent. For example, given a hand-written letter in English, one can focus on a low level of abstraction, such as the details of the various pen-strokes and the texture of the paper, or progressively higher levels of abstraction, such as recognizing letters in an alphabet, words, or sentences, or even some larger units of discourse that express the thought `Ralph won a ticket to Paris.'
A thing that realizes the information is of course a realization of the information: the physical thing that realizes the regularities of the information due to its local characteristics, just like a particular information-bearing message but more broadly construed. The concrete voltages down the wire realize an e-mail message, just as a physical book realizes some sentences in English. It is common practice to elide various levels of abstraction and just talk about information, but often it is useful to pull apart the abstract pattern of regularities from those physical things in the world that realize them. Since the term `information' is used indiscriminately to refer to information on a level of abstraction and the realization of some abstract information, we will use the term information realization or just realization when discussing a particular realization of information and use the term abstract information on the rare occasion when we wish to emphasize information on a level of abstraction regardless of its particular realization. When the term `information' by itself is used, we are referring to both abstract information and any of its particular realizations.

The second distinction is not as obvious as the distinction between abstract information and its realization: the distinction between the content and encoding of information. Shannon's theory deals with finding the optimal encoding and size of channel so that the message can be guaranteed to get from the sender to the receiver Shannon and Weaver (1963). Yet, how can an encoding be distinguished from the content of information itself? Goodman defines what we would call an encoding as a series of marks, where a mark is a physical characteristic ranging from marks on paper one can use to discern alphabetic characters to ranges of voltage that can be thought of as bits Goodman (1968). To be reliable in conveying information, an encoding should be physically ``differentiable'' and thus maintain what Goodman calls ``character indifference'' so that (at least within some context) each character (characteristic) can not be mistaken for another character. So, an encoding is a set of precise regularities that can be realized by the message. Encodings are usually given these regularities in virtue of being in a language, which is explicated in Section 3.3.

Is our distinction of `encoding' re-stating the difference between abstract information and realization? It is not. Although it would seem that information becomes somehow concrete within a particular region of space-time when it is encoded, on closer inspection, an encoding can still exist on a level of abstraction without being concretely realized in space-time. The term `Eiffel Tower' carries information in an encoding, but it is realized when some speaker uses it in an actual utterance. The text of Moby Dick can be thought of as abstract information, a story about a white whale. The text of Moby Dick in English is an encoding of the abstract information of Moby Dick, with precise regularities given by the very letters of the language. The content of the novel Moby Dick could be encoded in a different language, like French, and the precise regularities that convey the same information at a level of abstraction could be given by different physical characteristics and so different encodings. In the case of French versus English, different words and other linguistic nuances would exist, but the information would - at a level of abstraction, since obviously there are nuances possible in French that do not exist in English, and vice versa - be the same. So even the text of Moby Dick in a particular encoding like English exists at a level of abstraction, as it could be realized in multiple things in space-time, as a copy in English of Moby Dick could be realized by two different physical books, one in Edinburgh and the other in Jakarta. In fact, these realizations could also be quite different, such as a realization of Moby Dick in English as a web-page going down the wire as a particular set of voltages at a given time, and as a particular book on someone's bookshelf.2

There is more to information than encoding. Shannon's theory does not explain the notion of information fully, since giving someone the number of bits that a message contains does not tell the receiver what information is encoded. Shannon explicitly states that ``the fundamental problem of communication is that of reproducing at one point either exactly or approximately a message selected at another point. Frequently the messages have meaning; that is they refer to or are correlated according to some system with certain physical or conceptual entities. These semantic aspects of communication are irrelevant to the engineering problem'' Shannon and Weaver (1963). He is correct, at least for his particular engineering problem. However, Shannon's use of the term `information' is for our purposes the same as the `encoding' of information, but a more fully-fledged notion of information is needed. Many intuitions about the notion of information have to deal with not only how the information is encoded or how to encode it, but what a particular message is about, the content of an information-bearing message. `Content' is a term we adopt from Israel and Perry, as opposed to the more confusing term `semantic information' as employed by Floridi and Dretske Floridi (2004); Israel and Perry (1990); Dretske (1981).

While the notion of an information's content is hard to pin down, it is easy to illustrate. Just determining that a single employee out of eight won the lottery requires at least a three bit encoding and does not tell Amy which employee in particular won the lottery. Only a particular three bits will tell Amy precisely who won the lottery. Shannon's theory only measures how many bits are needed to tell Amy precisely who won. After all, the false message that another office-mate Sandro won a trip to Paris is also three bits. Yet content is not independent of the encoding, for content is conveyed by virtue of a particular encoding and a particular encoding imposes constraints on what content can be sent Shannon and Weaver (1963). Let's imagine that Daniel is using a code of bits specially designed for this problem, rather than natural language, to tell Amy who won the free plane ticket to Paris. The content of the encoding 001 could be Ralph while the content of the encoding 010 could be Sandro. If there are only two possible bits of information and all eight employees need one unique encoding, Daniel cannot send a message specifying which friend got the trip since there aren't enough options in the encodings to go round. An encoding of at least three bits is needed to give each employee a unique encoding. If 01 has the content that `either Sandro or Ralph won the ticket' the message has not been successfully transferred if the purpose of the message is to tell Amy precisely which employee won the ticket.
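The arithmetic behind this example can be checked with a short Python sketch. Only the count of eight employees and the codes 001 (Ralph) and 010 (Sandro) come from the text; the placeholder names for the other six employees, the function name `min_bits`, and the choice to assign codes in list order are hypothetical.

```python
def min_bits(n_outcomes: int) -> int:
    """Smallest code length (in bits) that gives each outcome a distinct code."""
    return (n_outcomes - 1).bit_length()

# Hypothetical office roster: only Ralph and Sandro are named in the text.
employees = ["E0", "Ralph", "Sandro", "E3", "E4", "E5", "E6", "E7"]

bits = min_bits(len(employees))
# Fixed-width binary codes, one per employee, assigned in list order.
codebook = {format(i, f"0{bits}b"): name for i, name in enumerate(employees)}

# A two-bit encoding offers only four distinct codes, too few for eight people.
two_bit_codes = 2 ** 2
```

The code length says how many bits any such message needs, while the codebook is what ties a particular three bits to a particular employee; the same three bits under a different codebook would pick out someone else.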

One of the first attempts to formulate a theory of informational content was due to Carnap and Bar-Hillel Carnap and Bar-Hillel (1952). Their theory attempted to bind a theory of content closely to first-order predicate logic, and so while their ``theory lies explicitly and wholly within semantics'' they explicitly do not address ``the information which the sender intended to convey by transmitting a certain message nor about the information a receiver obtained with a certain message,'' since they believed these notions could eventually be derived from their formal apparatus Carnap and Bar-Hillel (1952). Their overly restrictive notion of the content of information as logic did not gain widespread traction, and neither did other attempts to develop alternative theories of information such as that of Donald McKay McKay (1955). In contrast, Dretske's semantic theory of information defines the notion of content to be compatible with Shannon's information theory, and his notions have gained some traction within the philosophical community Dretske (1981). To Dretske, the content of a message and the amount of information as studied by Shannon are different, for ``saying `There is a gnu in my backyard' does not have more content than the utterance `There is a dog in my backyard' since the former is, statistically, less probable'' Dretske (1981). According to Shannon, there is more information in the former case precisely because it is less likely than the latter and so would require more bits to encode Dretske (1981). So while information that is less frequent may require a larger number of bits in an encoding, the content of information should be viewed as separable if compatible with Shannon's information theory, since otherwise one is led to the ``absurd view that among competent speakers of language, gibberish has more meaning than semantic discourse because it is much less frequent'' Dretske (1981). 
Shannon and Dretske are talking about distinct, but intertwined, notions that should be separated, namely encoding and content.
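The quantity that Shannon's theory assigns in the gnu and dog example can be made explicit. The formula below assumes the standard definition of the self-information of an outcome $x$ with probability $p(x)$; the text gives no actual probabilities, so only the ordering is used.

$$
I(x) = -\log_2 p(x), \qquad p(\text{gnu}) < p(\text{dog}) \;\Longrightarrow\; I(\text{gnu}) > I(\text{dog})
$$

This measures only how many bits an efficient encoding would spend on each utterance. It says nothing about what either utterance is about, which is the sense in which content has to be treated separately from encoding.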

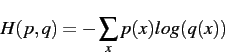

Is there a way to precisely define the content of a message? Dretske defines the content of information as ``a signal ![]() carries the information that

carries the information that ![]() is

is ![]() when the conditional probability of

when the conditional probability of ![]() 's being

's being ![]() , given

, given ![]() (and

(and ![]() ) is 1 (but, given

) is 1 (but, given ![]() alone, less than 1).

alone, less than 1). ![]() is the knowledge of the receiver'' Dretske (1981). To simplify, the content of any information-bearing message is whatever is held in common between the source and the receiver as a result of the conveyance of a particular message. While this is similar to our definition of information itself, it is different. Information can measure the total in common between a source and receiver simpliciter. For example, two distal humans can share quite a lot in common, and so share information, despite never having conveyed a message between each other. The content is whatever is shared in common as a result of a particular message, such as the conveyance of sentence `Ralph won a ticket to the Eiffel Tower.' The content of a message is called the ``facts'' by Dretske, (

is the knowledge of the receiver'' Dretske (1981). To simplify, the content of any information-bearing message is whatever is held in common between the source and the receiver as a result of the conveyance of a particular message. While this is similar to our definition of information itself, it is different. Information can measure the total in common between a source and receiver simpliciter. For example, two distal humans can share quite a lot in common, and so share information, despite never having conveyed a message between each other. The content is whatever is shared in common as a result of a particular message, such as the conveyance of the sentence `Ralph won a ticket to the Eiffel Tower.' The content of a message is called the ``facts'' by Dretske. This content is conveyed successfully from the source to the receiver when the content can be used by the receiver with certainty, and when before the receipt of the message the receiver was not certain of that particular content. Daniel can only successfully convey the content that `Ralph won a trip to Paris' if before receiving the message Amy does not know that Ralph won the trip to Paris and after receiving the message Amy does know that fact. To communicate content successfully, both the source and receiver have to be using the same encoding scheme (bits, English, etc.) and the source has to encode the content relative to what the receiver already knows or the capacities the receiver possesses. Thus, if Amy does not know who is specified by the term ``Ralph'' given by the encoding scheme, but only knows him as `the guy with the black beard,' Daniel needs to explain in his message the additional fact that the `fellow with the black beard at your office is Ralph.' However, we should interpret the term `certainty' more loosely than Dretske would. Dretske himself notes that information ``does not mean that a signal must tell us everything about a source to tell us something,'' it just has to tell enough so that the receiver is now certain about the content within the domain Dretske (1981). Millikan rightfully notes that Dretske states his definition too strongly, for this probability of 1 is just an approximation of a statistically ``good bet'' indexed to some domain where the information was learned to be recognized Millikan (2004). For example, lightning carries the content that ``a thunderstorm is nearby'' in rainy climes, but in an arid prairie lightning can convey a dust-storm. However, often the reverse is true, as the same content is carried by messages in different encodings, like the message from Daniel to Amy being encoded in either English or French.
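The condition for successful conveyance described above can be sketched in a few lines of Python. This is only an illustration under a strong simplifying assumption: the receiver's certainty is modelled as membership in a set of known contents, which ignores the probabilistic reading discussed by Dretske and Millikan. The function name and the content string are hypothetical.

```python
from typing import FrozenSet


def conveyed_successfully(
    content: str, before: FrozenSet[str], after: FrozenSet[str]
) -> bool:
    """True when the receiver lacked the content before the message
    and holds it afterwards (certainty simplified to set membership)."""
    return content not in before and content in after


# Hypothetical content of Daniel's message to Amy.
CONTENT = "Ralph won a trip to Paris"

amy_before: FrozenSet[str] = frozenset()
amy_after: FrozenSet[str] = frozenset({CONTENT})

# First receipt: Amy did not know the fact, and now she does.
first_receipt = conveyed_successfully(CONTENT, amy_before, amy_after)

# Repeated receipt: Amy already knew the fact, so nothing new is conveyed.
repeat_receipt = conveyed_successfully(CONTENT, amy_after, amy_after)
```

On this simplified reading, sending the same message twice conveys its content only the first time.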

In our example, the message that `Ralph won a plane ticket to France' can be encoded in two different languages and still have the same relationship to content. The relationship of an encoding to its content is an interpretation. The interpretation `fills' in the necessary background left out of the encoding, and maps the encoding to some content. In our previous example using binary digits as an encoding scheme, a mapping could be made between the encoding 001 and the content of Ralph, while the encoding 010 could be mapped to the content of Sandro. An interpretation requires an interpreter, an agent that is capable of carrying out an interpretation from a particular encoding to a particular content. The word `interpretation' is probably one of the most embattled words, and an in-depth study of its usage far exceeds the scope of this thesis. Somewhat unusually, our usage of the term `interpretation' is as a relationship between an interpreter and some encoding, not a first-order thing itself. This is done on purpose, in order to emphasize the fact that some interpreting agent is needed to actually make the interpretation from some encoding to content. When the word `interpretation' is used as a noun, we mean the content given by a particular relationship between an agent and an encoding. Usual definitions of `interpretation' tend to conflate these issues. In formal semantics, the word `interpretation' often can be used either in the sense of ``an interpretation structure, which is a `possible world' considered as something independent of any particular vocabulary'' (and so any agent) or ``an interpretation mapping from a vocabulary into the structure'' or as shorthand for both Hayes (2004). The use of the term seems to differ somewhat between fields.
For example, computational linguists often use ``interpretation'' to mean what Hayes called the ``interpretation structure.'' In contrast, we use the term `interpretation' to mean what Hayes called the ``interpretation mapping,'' reserving the word `content' for the ``interpretation structure'' or structures selected by a particular agent in relationship to some encoding. Also, this quick aside into matters of interpretation does not explicitly take on a formal definition of interpretation as done in model theory, although our general definition has been designed to be compatible with model-theoretic and other formal approaches to interpretation.
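The sense of interpretation used here, a mapping carried out by an agent from an encoding to content, can be illustrated with a minimal Python sketch. The class name `Interpreter` and the dictionary representation of an encoding scheme are assumptions made purely for illustration; they are not a formal model-theoretic definition.

```python
from typing import Dict, Optional

# Encoding scheme from the running example: bit strings mapped to content.
BINARY_SCHEME: Dict[str, str] = {"001": "Ralph", "010": "Sandro"}


class Interpreter:
    """An agent that relates encodings to content via a scheme it possesses."""

    def __init__(self, scheme: Dict[str, str]) -> None:
        self.scheme = scheme

    def interpret(self, encoding: str) -> Optional[str]:
        # Return the content for this encoding, or None when the agent
        # has no mapping for it.
        return self.scheme.get(encoding)


amy = Interpreter(BINARY_SCHEME)
ralph_content = amy.interpret("001")   # "Ralph"
sandro_content = amy.interpret("010")  # "Sandro"
```

The mapping belongs to the agent rather than to the encoding itself, which reflects the point that an interpreting agent is needed for any interpretation to take place.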

To uphold our requirement for physical non-spookiness, in order for an interpretation to take place, the interpreter and some realization of the encoding must be connected in some way, such as a human looking at bytes or a machine processing various voltages. In this manner, the examples of interpretation are almost always from particular information realizations in some particular encoding to some particular content. However, the relationship of interpretation is not bound to a particular realization of any information, but also functions at a level of abstraction, since many particular realizations of the same abstract information can have the same interpretation. Imagine that Amy is bilingual, and speaks both French and English, so if Daniel had two messages, one in English and another in French, explaining that Ralph has a plane ticket, both messages would have the same interpretation to the same content. So, while information has to be realized concretely in order to be interpreted in a given message by an agent, as many messages can have the same interpretation across many agents, the interpretation is thought to be between the encoding and the content, even when the encoding is at a level of abstraction. So, the single sentence `Ralph won a plane ticket to Paris' may have a single interpretation across many different utterances. However, if the agent and their background information change, the interpretation may change. If the e-mail from Daniel were intercepted and read by some secret agent not at Ralph's office, the secret agent may not know who Ralph is, while Amy will.

The content of a particular message depends very much on the encoding scheme used by the interpreter. For example, one can interpret the encoding 11 as either the number eleven in the decimal encoding scheme, or the number three in the binary encoding scheme. Unlike many others, including Dretske, we shall make no claims about the nature of information, interpretation, and truth, in particular whether what appears to be `false' information is really misinformation or pseudo-information. By remaining studiously neutral on this long-standing debate, our definition of information is vague enough that even encodings that are interpreted to be `false' still count as information. For example, if Daniel were sending the message to Amy that Ralph had a free plane ticket to Paris as some sort of jest or lie, Amy could still decode and interpret the message, and by filling in normal background assumptions (as Dretske put it, the ``channel assumptions'') she might assume that the message was true Dretske (1981). Amy would still have an interpretation of the content of the message; it would just be different from Daniel's interpretation. In other words, information may always have an encoding and content, and nothing forces some information realization to be interpreted to the same content by all interpreters.
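The dependence of content on the encoding scheme, as in the example of the encoding 11, can be made concrete with a short Python sketch. The helper name `interpret_numeral` is hypothetical; it wraps Python's built-in `int`, whose second argument gives the base of the positional scheme.

```python
def interpret_numeral(encoding: str, base: int) -> int:
    """Map a string of digits to a number under the given positional scheme."""
    return int(encoding, base)


# The same encoding yields different content under different schemes.
as_decimal = interpret_numeral("11", 10)  # eleven
as_binary = interpret_numeral("11", 2)    # three
```

Nothing in the string `"11"` itself selects one scheme over the other; the choice is supplied by the interpreter.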

Interestingly enough, this opens the door to the possibility of a sender sending an encoding to a receiver that lacks the necessary capacity to decode it. The encoding would not then have an interpretation to content. This would be the standard definition of data, which is information without an interpretation. This does not mean that the encoding could not possibly have an interpretation, only that at that given moment it cannot be interpreted. Our definition works well with other `textbook' definitions of data and information, such as that of Davis and Olson, which states that ``information is data that has been processed into a form that is meaningful to the recipient'' Davis and Olson (1985). One example would be if the message from Daniel that Ralph had won the plane ticket had been delivered via e-mail in French to an Amy who does not speak French. While Amy could have been aware of some very limited aspects of the e-mail (such as the time sent and the sender), she would lack the necessary knowledge of French to decode the message's content and so to have an interpretation of the message. In this manner, the e-mail from Daniel, while having a definite interpretation for French speakers, would lack an interpretation for Amy. To Amy, the message would just be data. Of course, Amy could learn French and eventually read the message, or send it to a machine-translation program, or ask a French speaker to translate the message for her, and so could eventually transform the encoding from data to information. One can also imagine cognitive constraints leading to a lack of an interpretation. For example, the volume of data gathered by modern telescopes is so enormous that much of it lies as uninterpreted reams of data rather than information for scientists. It is beyond a single human to interpret this data, and even groups of humans trying to interpret it in a distributed manner are still struggling to catch up with the volume of data produced by the telescopes.
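The distinction between data and information drawn above can be sketched as follows. This is a toy illustration only: the language codes, the sample sentences, the content identifier, and the function `receive` are all hypothetical, and a receiver's capacity to decode is reduced to a set of known languages.

```python
from typing import Dict, Optional, Set, Tuple

# Hypothetical identifier for the content shared by both encodings.
CONTENT = "ralph-has-plane-ticket-to-paris"

# Two encodings (English and French) of the same content.
MESSAGES: Dict[Tuple[str, str], str] = {
    ("en", "Ralph has a plane ticket to Paris"): CONTENT,
    ("fr", "Ralph a un billet d'avion pour Paris"): CONTENT,
}


def receive(
    language: str, text: str, known_languages: Set[str]
) -> Tuple[str, Optional[str]]:
    """Classify a message as 'information' (with its content) when the
    receiver can decode it, and as 'data' (no content) otherwise."""
    if language not in known_languages:
        return ("data", None)
    content = MESSAGES.get((language, text))
    if content is None:
        return ("data", None)
    return ("information", content)


french = ("fr", "Ralph a un billet d'avion pour Paris")

# Amy without French: the e-mail is merely data to her.
before_learning = receive(*french, known_languages={"en"})

# Amy after learning French: the same encoding becomes information.
after_learning = receive(*french, known_languages={"en", "fr"})

# The English encoding is interpreted to the same content.
english = receive("en", "Ralph has a plane ticket to Paris", known_languages={"en"})
```

The encoding is identical in the first two calls; only the receiver's capacity changes, and with it whether the encoding has an interpretation.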

These terms are all illustrated in Figure 3.1. A source (Amy) is sending a receiver (Daniel) a message. The information-bearing message realizes some particular encoding such as a few sentences in English and a picture of the Eiffel Tower, and the content of the message can be interpreted to be about the actual Eiffel Tower.

Information, which appeared so simple, is now revealed to be a multi-faceted phenomenon. To summarize, information is what is held or could be held in common between a sender and a receiver. Information is always thought of at a level of abstraction, and so abstract information can be realized concretely by some realization, like a particular message. Information, on both the level of abstract information and a particular realization, has two sides: encoding and content. The encoding is the precise regularities that can convey the information in a particular message, while the content is what is in common between the receiver and the sender as a result of the conveyance of a particular message. The thought `Ralph won a plane ticket to Paris' is the content, given an encoding in English by Daniel, and realized as some bits sent over the wire to Amy. These notions of encoding and content are not strictly separable, which is why they together compose the notion of information. An updated version of a famous maxim of Hegel could be applied to the new-fangled concept of information: There is no encoding without content, and no content without encoding Hegel (1959). In a similar vein, while we can imagine there being information without any realizations, we only know information through its concrete realizations.

The notion of interpretation implies the transfer of an encoding and an act of the interpreter that relates that encoding to content, nothing more. When Daniel sends Amy the e-mail to tell her Ralph has a plane ticket to Paris, Amy interprets the message by filling in various background information, and so determines that the Ralph at her office has a plane ticket to Paris. Amy has successfully interpreted the message. The effect upon an agent of an interpretation of some encoding is difficult to visualize, and one attempt that resonates is the notion of assertoric content given by Dummett (1973). Ignoring his larger project, we can simply say that one way to tell if an agent has interpreted an encoding to some content is that the agent would `assent' to various questions about this content. So, if Daniel asked Amy if she got the message about Ralph, minimally she should assert that she did, and if she does not, then perhaps she did not get the message.