The Versatile Batchop

| Author : | Brian S. Julin |
| Revision : | 1.1 |
| Revision date : | 2004-09-18 |

A "batchop" is essentially a format descriptor for data which is organized in arrays. The mmutils library defines batchops and ways to operate on the data contained in these arrays.

A bird's eye view of batchops

The batchop structure is a holder for an array of "batchparm" structures. It contains a "numparms" member which says how many batchparm structures are in the array, which is located through the pointer member "parms". If you consider the batchop to be like a spreadsheet full of values, a batchparm is like a column header.

The batchop structure also contains two indexes: one for writing input into the data areas which the batchop manages and one for reading output from the data areas. Thinking again in spreadsheet terms, they can be thought of as referring to a row in the spreadsheet. They are used to contain the state of a transfer between two batchops. Since this state is contained inside the batchop, no batchop can be involved in more than one output or input operation at the same time. However, it is possible to transfer data into a batchop's area at the same time as data is being transferred out of the batchop's data area -- this must be done with care that access to the data does not collide.
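To make the spreadsheet analogy concrete, here is a minimal sketch of the shape described above. The struct and member names (toy_batchop, in_idx, out_idx and so on) are hypothetical stand-ins chosen for illustration; the real definitions live in mmutils.h and may differ.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical layout, for illustration only; see mmutils.h for the real one. */
struct toy_batchparm {
    int parmtype;                 /* what kind of value this column holds */
};

struct toy_batchop {
    size_t numparms;              /* number of entries in parms[] */
    struct toy_batchparm *parms;  /* the "column headers" */
    size_t in_idx;                /* row currently being written */
    size_t out_idx;               /* row currently being read */
};

/* Return the column header at position col. */
static struct toy_batchparm *toy_column(struct toy_batchop *bo, size_t col)
{
    assert(bo != NULL && col < bo->numparms);
    return &bo->parms[col];
}
```

Because both indexes live inside the one structure, a second concurrent reader (or writer) would overwrite the first one's position, which is why a batchop can take part in only one input and one output transfer at a time.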

The high level functions can be explained without going into further detail. Let us assume that you have two batchops named bsrc and bdest, which have already been created for you (we will cover how batchops are created later). Assume also that bsrc is managing some data areas that are already full of data, and bdest is managing areas that you want to transfer the data into.

The first step in transferring data is to call the macro bo_match(bsrc, bdest). This will study the two batchops and fill out some internal values in bdest that will speed up the data transfer. Because this state is kept in bdest, it is possible for you to do this:

bo_match(bsrc, bdest); bo_match(bsrc, bdest2);

...now bdest and bdest2 are both prepared to receive data from bsrc. But it does not work the other way around -- you cannot do this:

bo_match(bsrc, bdest); bo_match(bsrc2, bdest); /* Wrong! */

...because after this, bdest will be prepared to receive data from bsrc2, not from bsrc.

The second step is to set the read and write indices on your batchops. The function bo_set_in_idx() will set the destination's write index to point to any given "row", and the function bo_set_out_idx() will set the read index on the source:

/* Copy starting from row 40 of bsrc to bdest starting at row 5 */ bo_set_in_idx(bdest, 5); bo_set_out_idx(bsrc, 40);

Row values start at zero. There are also bo_inc_*_idx functions which will move to the next row in the batchop, but you will rarely have a use for those. Make sure to set the index of a batchop before use: doing so ensures that the internal state is consistent.

Now we are ready to copy data. This is as simple as calling the macro bo_go:

bo_go(bsrc, bdest, 6); /* Copy 6 "rows" of data from bsrc to bdest */

If you are in a threaded program, you can check the progress of the copy from another thread with the macro bo_at(bsrc).
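The match / set index / go sequence can be modeled with a toy that uses plain int cells instead of bit-addressed columns. Everything here (toy_table, toy_copy, the map array) is hypothetical and only mirrors the behaviour described in the text; in particular, the assumption that both indices advance row by row is inferred from the existence of a progress query.

```c
#include <assert.h>
#include <stddef.h>

/* Toy table: rows * ncols ints in row-major order, plus a current-row index. */
struct toy_table {
    int *cells;
    size_t ncols;
    size_t idx;      /* read index on a source, write index on a destination */
};

/*
 * Copy n rows from src (starting at src->idx) to dest (starting at dest->idx).
 * map[d] names the source column feeding destination column d, or -1.
 * Both indices advance as rows are copied, so they also report progress.
 */
static void toy_copy(struct toy_table *src, struct toy_table *dest,
                     const int *map, size_t n)
{
    assert(src != NULL && dest != NULL && map != NULL);
    for (size_t r = 0; r < n; r++, src->idx++, dest->idx++) {
        for (size_t d = 0; d < dest->ncols; d++) {
            if (map[d] >= 0)
                dest->cells[dest->idx * dest->ncols + d] =
                    src->cells[src->idx * src->ncols + (size_t)map[d]];
        }
    }
}
```

Setting idx on each table before the call plays the role of bo_set_out_idx and bo_set_in_idx, and the precomputed map plays the role of the state that bo_match stores in the destination.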

Simple, eh? But what's really going on, and why is it useful? Read on...

A closer look

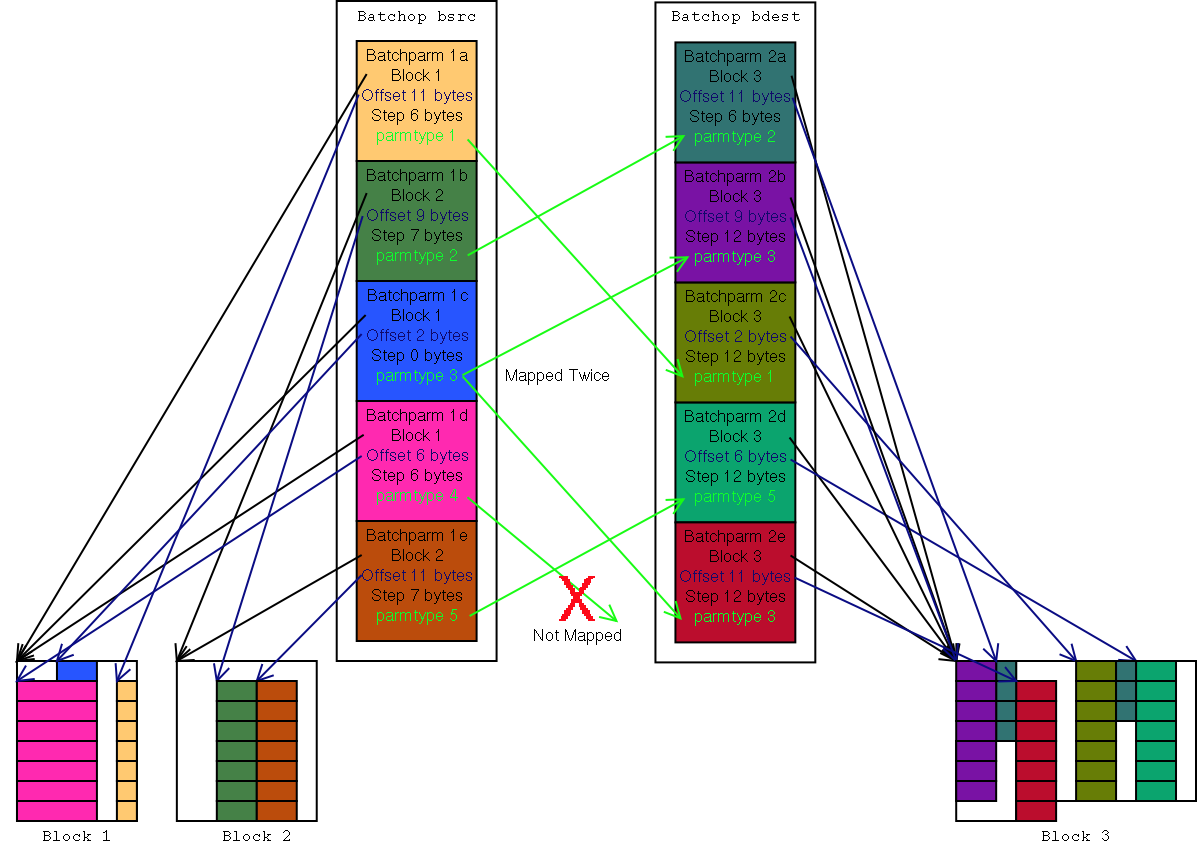

Take a look at Figure 1, at the green lines in the center (my apologies to the color-blind). The figure depicts bsrc and bdest and shows the batchparms which they contain. Each batchparm has a "parmtype". When the bo_match macro is called, the parmtypes are compared and source batchparms are mapped to destination batchparms. So, as long as bsrc contains at least one batchparm of the same parmtype as a given batchparm in bdest, that destination batchparm will receive values from the matching batchparm in bsrc.

There are some rules as to how this matching takes place.

- Look at batchparm 1d. It has parmtype 4, but there are no batchparms in bdest that have parmtype 4. So the values managed by batchparm 1d are ignored, and will not be copied into the data area managed by bdest.

- Now look at batchparm 1c. It has parmtype 3, and so do batchparms 2b and 2e. In this case, the data managed by batchparm 1c is duplicated into the data areas managed by batchparms 2b and 2e.

- If no match is found for a batchparm in bdest, then no values are copied into the data area managed by that batchparm.

- If more than one match occurs for a batchparm in bdest, the first one found in bsrc is used and any further ones are ignored.

- The order in which the data is copied is the same as the order of the batchparms in bdest. In Figure 1, first a value is copied from the column managed by batchparm 1b into the column managed by batchparm 2a. Then data is copied from 1c to 2b, then 1a to 2c, then 1e to 2d, then 1c to 2e.
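The rules in this list amount to a small nested loop. The following is a simplified sketch, not the mmutils matcher: it compares parmtypes only, and toy_match and its map output are names invented for the example.

```c
#include <assert.h>
#include <stddef.h>

/*
 * Toy model of parmtype matching; not the mmutils implementation.
 * For each destination column, record the index of the first source
 * column with the same parmtype, or -1 if there is none.
 */
static void toy_match(const int *src_types, size_t nsrc,
                      const int *dst_types, size_t ndst, int *map)
{
    assert(src_types != NULL && dst_types != NULL && map != NULL);
    for (size_t d = 0; d < ndst; d++) {
        map[d] = -1;                  /* no match: column is left untouched */
        for (size_t s = 0; s < nsrc; s++) {
            if (src_types[s] == dst_types[d]) {
                map[d] = (int)s;      /* first match in the source wins */
                break;
            }
        }
    }
}
```

Walking the destination in order and looking up map[d] for each column reproduces the copy order given above. A source column that no destination asks for (such as 1d) simply never appears in map, and one that two destinations ask for (such as 1c) appears twice.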

Now let us take a look at the black and blue arrows in Figure 1. They show how a batchparm points to data in the managed data areas. In this example, bsrc is managing two blocks of data. Block 1 contains data columns managed by batchparms 1a, 1c, and 1d, and Block 2 contains data columns managed by batchparms 1b and 1e. The batchop bdest is only managing one data area, Block 3. Block 1 and Block 2 can be located anywhere in memory, and Block 3 could even, if it were structured right, overlap with Block 1 and Block 2. Batchops are thus a handy way to merge arrays.

The "block" fields in the batchparms point to a data area, and the "offset" fields point to the first value in the column that the batchparm represents. A field which is not shown in the diagram, the "datasize" field, tells how many bits each value in the column contains. It is easy to guess that from the width of the columns in the diagram.

The "step" field tells the distance between rows in this column. Note the batchparm 2a in the diagram. This has a different step value than the rest of the batchparms in bdest, and as a result, the data it represents is distributed differently inside Block 3. This would actually be an unusual batchop, but it does demonstrate the flexibility of batchops.

Now note the batchparm 1c. This has a "step" value of zero, which is a special case that designates a constant. Because of this, the columns managed by batchparms 2b and 2e will be filled with the value from that one memory location. (The beauty of that is that it is not actually a "special case" at all, because the step value can be added normally between rows and, being 0, ends up pointing to the same place.)
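A short sketch shows why a step of zero needs no special handling. The descriptor below is a hypothetical stand-in for the block, offset, datasize and step fields, and the most-significant-bit-first read order is an assumption made for the example, not a statement about mmutils.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical column descriptor; offsets and steps are in bits. */
struct toy_col {
    const uint8_t *block;
    long offset;        /* bit position of row 0 within block */
    long step;          /* bit distance between rows; 0 gives a constant */
    unsigned datasize;  /* width of each value in bits (1..32) */
};

/* Bit position of the given row: the same arithmetic for every step value. */
static long toy_row_bitpos(const struct toy_col *c, long row)
{
    return c->offset + row * c->step;
}

/* Read one value, taking bits most-significant first (an assumed bit order). */
static uint32_t toy_read(const struct toy_col *c, long row)
{
    long pos = toy_row_bitpos(c, row);
    uint32_t v = 0;
    assert(pos >= 0 && c->datasize >= 1 && c->datasize <= 32);
    for (unsigned i = 0; i < c->datasize; i++, pos++) {
        unsigned bit = (c->block[pos / 8] >> (7 - pos % 8)) & 1u;
        v = (v << 1) | bit;
    }
    return v;
}
```

With step set to 0, toy_row_bitpos returns the same position for every row, so every row reads the same memory location and the column behaves as a constant.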

Adjustments, translation, and the real story of mapping

You may have already noted in the diagram in the last section that the sizes of some of the columns do not match (for example, the 8 bit column managed by batchparm 1a is mapped to the 16 bit column managed by batchparm 2c.) This isn't a mistake. Batchops are meant to provide not only raw data copy operations, but also to do very basic translation between different data formats. The available translations supported (so far) are:

- right or left justified integer resizing

- bitwise inversion

- integer negation

- integer test (<, <=, ==, >=, >, !=)

- endian and bitswap translations of the block

- endian and bitswap translations of the field

In addition, though the diagram depicts only byte-aligned values of size 8, 16, and 32 bits, the batchparm "step" and "size" values are actually given in bits, not in bytes, so bitwise operations are also supported.
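As an illustration of the first translation in the list, here is a sketch of right- versus left-justified resizing of an unsigned value. It assumes the usual meaning of the terms (right-justified keeps the low-order bits, left-justified keeps the high-order bits); toy_resize is a made-up helper, and the library's exact rounding and sign handling are not covered.

```c
#include <assert.h>
#include <stdint.h>

/*
 * Resize an unsigned value from from_bits to to_bits wide.
 * Right-justified: the low-order bits are preserved.
 * Left-justified: the high-order bits are preserved.
 * The input is expected to fit in from_bits.
 */
static uint32_t toy_resize(uint32_t v, unsigned from_bits, unsigned to_bits,
                           int left_justify)
{
    assert(from_bits >= 1 && from_bits <= 32 && to_bits >= 1 && to_bits <= 32);
    uint32_t mask = (to_bits == 32) ? 0xFFFFFFFFu : ((1u << to_bits) - 1u);
    if (left_justify) {
        if (to_bits >= from_bits)
            v <<= (to_bits - from_bits);   /* pad with zeros on the right */
        else
            v >>= (from_bits - to_bits);   /* drop low-order bits */
    }
    return v & mask;                       /* drop anything above to_bits */
}
```

Right justification suits counts and coordinates, where the numeric value should survive a widening; left justification suits intensities such as color channels, where full scale should stay close to full scale.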

Now for a confession: what was said earlier about how parmtypes are matched was a bit misleading. In fact, it is not merely the parmtype that is compared: the data sizes, bit ordering, and sense of the parameters are also compared to find the "best match". The best match is the batchparm that loses the least accuracy; in the event of a tie on that criterion, the one that requires the fewest operations to perform the required transfer and conversions is chosen, even if it is below another batchparm in the source batchop.

Batchop optimization

Hold on, you say, now that you bring up efficiency, the code to bit shift values from a column, decide whether to perform the various conversions, deal with endianness issues, and then bit-shift and mask it back into the destination column will either be a morass of flow control statements, or horribly suboptimal. We'd get more speed by just writing optimized code for the simpler case, right?

Well, we do optimize, but instead of optimizing off-the-cuff, we write interchangeable, reusable optimizations. Looking back at how batchops are set in motion, we mentioned the macro bo_go(). This macro is defined as follows:

#define bo_go(src, dest, n) ((dest->go == NULL) ? bo_def_go(src, dest, n) : dest->go(src, dest, n))

Calling the bo_match() macro not only matches the batchparms to each other, it also examines both the source and the destination batchop and loads the best available bo_go implementation for the given combination of batchops onto the destination batchop's "go" hook. So if, for example, the two batchops contain only byte-aligned little endian values, they are processed by a slimmed down, optimized implementation that does not sacrifice speed for flexibility. (And yes, the default "handle anything" function, bo_def_go, is indeed a suboptimal morass of flow control statements, but at least it is there as a fallback.) Surprise: it does not end there. Look at the definition of the bo_match() macro:

#define bo_match(src, dest) ((dest->match == NULL) ? bo_def_match(src, dest) : dest->match(src, dest))

This is the capper which allows three extremely powerful things to happen. One is for optimized implementations to be mixed in from other codebases -- application and library developers are not limited to the generic set of implementations available in the mmutils mini-library. Another is that specialized translations can be added -- for example, translation of x,y,w,h values to x1,y1,x2,y2 coordinates on the fly. Finally, it allows for pseudo-batchops which do not store the values written to them, but rather use them as commands and parameters to perform operations as each row is written.
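The hook mechanism is ordinary function-pointer dispatch. The sketch below reproduces the shape of the bo_go macro as a function on a toy structure; toy_bo, toy_def_go, toy_fast_go and the last_path member are all invented for the demonstration.

```c
#include <assert.h>
#include <stddef.h>

struct toy_bo;
typedef int (*toy_go_fn)(struct toy_bo *src, struct toy_bo *dest, int n);

struct toy_bo {
    toy_go_fn go;    /* NULL means "use the generic fallback" */
    int last_path;   /* records which implementation ran (demo only) */
};

/* Generic fallback: would handle anything, slowly. */
static int toy_def_go(struct toy_bo *src, struct toy_bo *dest, int n)
{
    (void)src;
    dest->last_path = 0;
    return n;
}

/* A specialized implementation that a matcher could install on the hook. */
static int toy_fast_go(struct toy_bo *src, struct toy_bo *dest, int n)
{
    (void)src;
    dest->last_path = 1;
    return n;
}

/* Same shape as the bo_go macro, written as a function. */
static int toy_go(struct toy_bo *src, struct toy_bo *dest, int n)
{
    assert(src != NULL && dest != NULL);
    return (dest->go == NULL) ? toy_def_go(src, dest, n)
                              : dest->go(src, dest, n);
}
```

Since the hook is just a member of the destination, code outside the library can point it at its own function, which is what makes third-party optimizations, custom translations and command-style pseudo-batchops possible.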

A final detail: transient batchparms

What do you do if the destination batchop's managed area is write-only? Consider what happens if you are trying to load two four-bit batchparms from bsrc to the same write-only byte in bdest. If it is done naively, then the first value to be written will be zeroed out or otherwise mangled by the second value, or worse -- the attempt to read the old value out of the destination area in order to merge the unaffected bits could cause unforeseen results like segmentation violations, corruption, or system hangs if directly accessing a hardware MMIO region.

To cope with this eventuality, a batchop may contain a special batchparm called a "transient". A transient batchparm provides a "transient block" where other batchparms can read/write their data, instead of reading directly from a batchparm in the source batchop or writing directly to a batchparm in the destination batchop. For example, instead of having batchparms "3a" and "3b" put interleaved 4-bit values directly into one of the "real" data blocks, each of them is given step 0 and pointed at a location in the transient block. Later, when the transient batchparm itself is reached, the 8-bit combined value is pulled from the transient block and put into the real data block.

Use of transient batchparms is not just for write-only memory, and using them is encouraged. They may also be used to aid in optimization of the batchop "go" operation, to avoid redundant shifting and comparison of the data occupying the same bytes as the transferred data. To that end, a transient batchparm may carry a flag that causes the current contents of the final destination byte(s) to be read into the transient block before any values are transferred to/from the transient block. This flag must be set if the batchop in question will be acting as a source in addition to a destination.

Naturally, a transient batchparm should be positioned lower in the batchop than any batchparms that refer to it, such that it is written last, after the other batchparms have written into the transient block.
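The two-nibble case can be sketched in a few lines. The helpers toy_put_nibble and toy_flush are hypothetical and stand in for what the ordinary batchparms and the transient batchparm would do, respectively; a volatile byte plays the write-only register.

```c
#include <assert.h>
#include <stdint.h>

/* Merge a 4-bit value into a scratch byte; reading scratch memory is safe. */
static void toy_put_nibble(uint8_t *transient, unsigned shift, uint8_t value)
{
    assert(shift == 0 || shift == 4);
    *transient = (uint8_t)((*transient & ~(0x0Fu << shift)) |
                           ((value & 0x0Fu) << shift));
}

/* Flush the combined byte with a single store; the destination is never read. */
static void toy_flush(volatile uint8_t *write_only_dest, uint8_t transient)
{
    *write_only_dest = transient;
}
```

All read-modify-write traffic lands on the scratch byte, and the real destination sees exactly one write per row, which is also why the flush has to come after every batchparm that feeds it.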

Coding a source batchop

There are two levels at which to code with batchops. The first is when you simply want to create a source batchop. In this case, all you have to do is call bo_calloc to allocate a new batchop. It takes as arguments the number of batchparms the batchop should contain, and a default value for the .block member of the batchparms. It returns a pointer to your new batchop, or NULL if there was a failure to allocate memory.

Once you have this, you should start by setting the .block and .offset of the first item in a data column into the batchparm that will represent the column. Note that the value in .block is a normal C pointer, and must be aligned to a 64-bit boundary. The value in .offset is given in bits, and should not be greater than BO_BP_OFFSET_MAX or less than BO_BP_OFFSET_MIN; if it is, then you will have to point .block closer to the beginning of the column.

Now, set the datasize of the batchparm to say how many bits wide the values in your column are, and the step to the number of bits between values (between the first bits in two consecutive values, that is; e.g. it would be 8 if you were indexing a "row" of consecutive characters). The step must be no more than BO_BP_STEP_MAX and no less than BO_BP_STEP_MIN (which is negative, so you can step backwards through memory). Once you have set your blocks, offsets, sizes and steps on all your batchparms, you should call bo_set_in_idx and bo_set_out_idx to get the internal fields of the batchop correctly initialized.
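When the data lives in an array of C structs, the three numbers fall out of offsetof and sizeof. This is a sketch under assumptions: struct vertex is an invented application type, and toy_parm merely mimics the block, offset, datasize and step fields rather than being the real batchparm (it also ignores the alignment and range limits mentioned above).

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Application data: an array of these forms the "spreadsheet". */
struct vertex {
    uint16_t x;
    uint16_t y;
    uint8_t  color;
};

/* Hypothetical stand-in for the batchparm fields discussed above. */
struct toy_parm {
    void *block;
    long offset;     /* bits from block to the first value */
    long datasize;   /* bits per value */
    long step;       /* bits between consecutive values */
};

/* Describe the "y" column of an array of struct vertex. */
static struct toy_parm toy_describe_y(struct vertex *rows)
{
    struct toy_parm p;
    assert(rows != NULL);
    p.block    = rows;
    p.offset   = (long)offsetof(struct vertex, y) * 8;
    p.datasize = (long)sizeof(rows->y) * 8;
    p.step     = (long)sizeof(struct vertex) * 8;  /* includes padding */
    return p;
}
```

Using sizeof for the step rather than summing the member widths matters, because the compiler may pad the struct and the padding is part of the distance between rows.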

Now assign the batchparm a type: types are application specific and will be defined either by you for use with a destination batchop you are writing, or in the headers of a library which is using mmutils (e.g. various extensions to LibGGI, for which mmutils was originally developed).

There are all sorts of flags available to modify the parmtype. If the value is signed, OR the parmtype with the BO_PT_SINT flag value. If the value should be left justified when moved to a larger data size, set the BO_PT_JUSTIFY_LEFT flag (this will also affect the truncation behavior if the data is moved to a smaller datasize). You may set the BO_PT_INVERT flag to have the value bitwise inverted, e.g. for an active-low bit.

In addition, there are operations that may be performed on the batchop that take a constant argument, represented by the 64-bit integer batchparm member .arg, which is available in signed (.arg.s64) and unsigned (.arg.u64) flavors, depending on whether you set the BO_PT_SINT flag. These operations only occur on the parameter when it is being read out of a source batchparm. You may choose from several "adjuster" operation values: to add .arg to the value, use BO_PT_ADD; to compare it against .arg and produce a boolean result, use a comparison such as BO_PT_CMP_LTE; or you may negate the value by placing the value 1 in the .arg member and OR'ing in the value BO_PT_NEGATE.
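The effect of the adjusters on a single signed value can be modeled as below. The enum and function names are hypothetical; the real selectors are BO_PT_* flag values OR'ed into the parmtype, and treating a nonzero arg as "negation enabled" is an assumption based on the instruction to store 1 in .arg.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical adjuster codes; the real ones are BO_PT_* flag values. */
enum toy_adjust { TOY_NONE, TOY_ADD, TOY_CMP_LTE, TOY_NEGATE };

/* Apply one adjuster to a signed value as it is read from the source. */
static int64_t toy_adjust_value(int64_t v, enum toy_adjust op, int64_t arg)
{
    switch (op) {
    case TOY_ADD:     return v + arg;
    case TOY_CMP_LTE: return v <= arg;        /* boolean result: 0 or 1 */
    case TOY_NEGATE:  return arg ? -v : v;    /* assumed: nonzero arg enables */
    case TOY_NONE:    return v;
    }
    assert(!"unknown adjuster");
    return v;
}
```

Because the adjustment happens on the way out of the source, the stored data is left as it was, and the same column can feed different destinations with different adjustments.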

Finally, we get down to the lurid business of endianness. There is a .endian member in the batchparm containing two sets of flags to deal with the horrible mess that is endianness. You may not have to touch it: leave it set to 0 if all your batchparms are 8, 16, 32, or 64-bit values aligned on 8, 16, 32, or 64-bit boundaries respectively.

The first set of flags applies to the block the data is stored in. If this block needs to be reordered you can use the BP_EB_RE_* flags to reverse the bit or byte ordering. Note that each of those flags means only to swab chunks of that particular size, so, for example, translating from 32-bit little-endian to 32-bit big-endian or vice versa is not done by setting the flag BO_EB_RE_32, but rather by setting the flags of the chunk sizes to be swapped: BO_PT_RE_16 | BO_PT_RE_8. Make sure to get the block endian flags right, or your data will be horribly munged -- and, when you set them, make sure that the area pointed to by your data block is accessible when padded out to align with the first and last possible location of your column values.
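The reason a 32-bit endian conversion is expressed as a 16-bit swap combined with an 8-bit swap can be checked with plain integer arithmetic. The helper names are invented for this sketch and are unrelated to the flag names in the text.

```c
#include <assert.h>
#include <stdint.h>

/* Swap the two bytes inside each 16-bit half of a 32-bit word. */
static uint32_t toy_swab8(uint32_t v)
{
    return ((v & 0x00FF00FFu) << 8) | ((v & 0xFF00FF00u) >> 8);
}

/* Swap the two 16-bit halves of a 32-bit word. */
static uint32_t toy_swab16(uint32_t v)
{
    return (v << 16) | (v >> 16);
}

/* Full 32-bit byte reversal, built from the two smaller swaps. */
static uint32_t toy_bswap32(uint32_t v)
{
    uint32_t r = toy_swab16(toy_swab8(v));
    assert(toy_swab16(toy_swab8(r)) == v);   /* the reversal is its own inverse */
    return r;
}
```

Each swap exchanges neighbouring chunks of one size only, so 0x11223344 becomes 0x22114433 after the byte swap and 0x44332211 after the halfword swap. The two steps can be applied in either order with the same result.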

The second set of flags pertains to the field in the block, after the block itself has been un-munged. If you were to memcpy/bitshift the data in your field into the smallest C integer data type that would contain it, would you then have to swab any nybbles/bytes/words to get the value contained in the C integer to match the value the field is supposed to contain? In that case, set the appropriate BP_EP_RE_* flags on this batchparm.

Finally, if the block has restrictions on the data access widths used to read it, you will want to set the BP_ACCESS_* flags for each of the supported access widths. (The default value, 0, is a special case that means that all access widths are supported _and_ that it is OK to copy bytes which do not contain part of your column, if it is more efficient to do so than to skirt around those bytes. This is the appropriate setting for normal system RAM.)

Great, so now you can go create a hairy bastard 2 and 4-bit endian reversed 17-bit-wide 25-bit-step column of data that gets the value 15 added to it, with no cares, right? Well, keep in mind that someone is writing the destination batchop and its associated match/go functions, and they are probably optimizing more for the common cases. If you do something contorted, you'll probably be shunted through a less optimized "go" function, and won't get the speed you were hoping for. Use common sense when generating data to be fed through a batchop; don't rely on them to do work you can do when first filling your block with data. Or, in some cases, you don't have to depend entirely on common sense: if you have been provided the handle to, or a copy of, your destination batchop, it is considered fair usage to look inside and customize the data you generate to be easily convertible to the values which the destination batchop is expecting.

Coding a destination batchop

This section of the docs is not complete, as the codebase is still in the process of converging (been back to square one a few times so far.)

A "destination batchop" is usually more than just a batchop structure as described above... unless you are satisfied with a pre-existing set of match/go functions you will want to write your own. The mmutils library contains numerous conveniences to help with that task.

Probably the most useful tool in mmutils is the bo_summarize function. This can be run on both the source and destination batchops to give a rough idea of how simple the batchparms contained inside them are. The bo_summarize function expects a pointer to a bo_summary structure, which you will have to allocate. See the comments in the mmutils.h file to understand what the returned values mean. There is also a bo_summarize_pair function which returns some additional information about any given combination of source/destination batchops.